AV Is More Than the Sum of Its Parts

There’s a very popular ride at a theme park here in California with an infamous history. The ride combines a motion platform with a screen. Riders enter, sit down and buckle up. Then the screen comes alive, acting as a windshield while the video plays out a high-speed adventure. The motion platform tilts forward, back and side to side, varying the G-forces on the body to create the sensation of movement.

There was a problem, though. When the ride first opened, a lot of riders were getting physically ill.

On paper, that didn’t make sense. The G-forces it generated were similar to those of other motion platform rides, and the video matched other screen-based rides in frame rate and speed.

So what was the issue? The secret is in our brains. Human brains are amazing at parallel processing (doing lots of things at once) and are constantly processing signals from all of our senses (sight, sound, touch, smell and taste). On the motion-based ride, the brain was focused on visual and kinetic input and was processing both simultaneously. The problem? The two inputs didn’t match up, and that mismatch made riders ill.

The screen (visual) made it look like you were going 90 miles an hour, but the G-force exerted on the body (kinetic/feeling) felt like 50 miles an hour. That conflict threw the brain off and triggered a physiological response in the riders. Once the ride was adjusted to bring the two inputs into line, the experience instantly improved. The point is that simply stacking sensory experiences to create depth doesn’t automatically produce a better experience, and it can have unintended consequences if not well thought out.

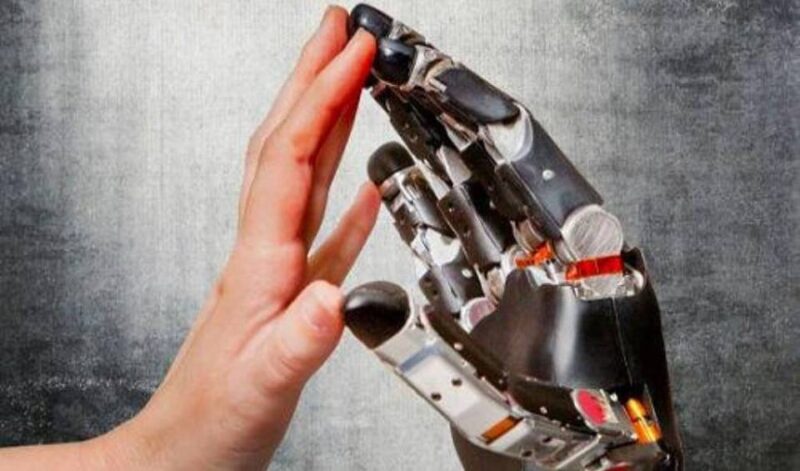

Now, a lot of you are thinking that this is interesting but not relevant to your world, since you build commercial AV systems, not theme park rides. Not so fast. We are now entering Web 3.0, with metaverse applications, XR and other immersive, experiential environments. Multiple studies are already underway on how XR affects the body’s vestibular sense and on vection, the visually induced illusion of self-motion; a conflict between the two is a well-known trigger of motion sickness.

Making people feel sick is obviously a non-starter, but beyond that, the senses also need to work in tandem to create real immersion. If you’re building something like an immersive LED experience and want to add audio, a conventional stereo or distributed audio system will not deliver the desired impact. It will actually keep audiences from being fully immersed. Instead, to “match up” the sensory inputs, you’ll want to create 3D soundscapes and immersive audio so that sounds and effects come from the same areas as the corresponding visuals.

This requires more than signal processing, amplification and speakers; it also calls for customized content, additional processing and potentially dedicated hardware to generate sounds from very specific places in the room. Even if you’re not building a fully immersive application, matching what people see with what they hear can be important.

Think of something like Microsoft Front Row for Teams, where remote participants are all displayed in a horizontal row at the bottom of the screen. If you’re looking at someone on the left side, you should hear their audio coming from that location. The sound should come from the point of visual focus; otherwise, the brain will always sense a disconnect about the source (your visual attention is straight ahead, but the voice seems to come from above you). It may not make you vomit, but it will cause fatigue over time. Just as we use beamforming microphones to pick up sound from specific directions, we should be thinking about deploying processing and multi-element speakers to align what the eyes see with what the ears hear.
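To make the eyes-and-ears alignment idea concrete, here is a minimal sketch of one common technique, pairwise constant-power amplitude panning across a row of speakers (a one-dimensional simplification of vector base amplitude panning). It assumes a hypothetical setup: `num_speakers` speakers spaced evenly in a line along the display, and `num_tiles` participant tiles spaced evenly across the same width. The function names `tile_position` and `pan_gains` are illustrative only; this is not how Microsoft Teams Rooms or any particular product implements audio placement, and a real system would also account for delay, room acoustics and listener position.

```python
import math


def tile_position(tile_index: int, num_tiles: int) -> float:
    """Hypothetical helper: horizontal center of a participant tile,
    normalized so 0.0 is the left edge of the display and 1.0 the right."""
    return (tile_index + 0.5) / num_tiles


def pan_gains(position: float, num_speakers: int) -> list[float]:
    """Pairwise constant-power panning across an evenly spaced speaker row.

    Assumes speaker 0 sits at the left edge (0.0) and the last speaker at
    the right edge (1.0). Only the two speakers bracketing the source get
    signal, and their squared gains always sum to 1, so perceived loudness
    stays roughly constant as a voice moves across the row.
    """
    if num_speakers < 2:
        raise ValueError("need at least two speakers")
    position = min(max(position, 0.0), 1.0)  # clamp to the display width
    # Map the position onto the continuous speaker-index axis.
    x = position * (num_speakers - 1)
    left = min(math.floor(x), num_speakers - 2)  # left speaker of the active pair
    frac = x - left  # 0.0 = exactly at left speaker, 1.0 = at right speaker
    theta = frac * math.pi / 2  # sine/cosine (constant-power) pan law
    gains = [0.0] * num_speakers
    gains[left] = math.cos(theta)
    gains[left + 1] = math.sin(theta)
    return gains


if __name__ == "__main__":
    # Route each of five participant tiles to a hypothetical four-speaker row.
    for tile in range(5):
        pos = tile_position(tile, 5)
        print(tile, [round(g, 3) for g in pan_gains(pos, 4)])
```

The constant-power law is used instead of a simple linear crossfade because a linear split dips in loudness when a voice sits midway between two speakers; with sine/cosine gains, the total acoustic power stays the same wherever the participant appears on screen.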

In the past, when virtual and remote participation were a small part of the way we worked, these types of features and systems could arguably be considered luxuries. But in the future of work, if we truly want to blur the lines between the virtual and the physical, we must not only feed the senses but do so in a way that parallels how we perceive things in real life. If not, there will always be a potentially jarring difference between the virtual and the physical, between the online (URL) and the actual (IRL) experiences.