Where we’re going, we don’t need codes

Are you one of the lucky individuals who scored a Kinect on Black Friday, or maybe you already had one? Either way, if you have the XBOX 360 + Kinect, go turn it on and update to the new dashboard. Go ahead, I’ll wait.

Last night the new dashboard update went live to all users and, well, it’s awesome.

Last month I talked about gesture and voice controls, and now, aside from the Kinect hacks already out there, Microsoft has done its own tweaks and incorporated a lot of that into its new dashboard.

Last night I was at a friend’s house, turned on the old box, and behold, a new update was ready. So, naturally without reading of course, update we did. I remember hearing about the update earlier during my morning caffeination session (where I tend to read and research) and I was wondering why it didn’t happen earlier in the AM. For whatever reason it was delayed.

Anyway, first thing I did was ask it to make me a sandwich. Sadly, no sandwich was had. Then, my buddy told me to shut up as he waved his hand towards the Kinect motion bar. It recognized him, and after he activated the voice controls, he was able to dig down into the menus via voice and change pages with a flick of the wrist and a swipe of the hand. As I sat there in awe, I wondered how long it will be until we are controlling other devices in the home with motion and voice.

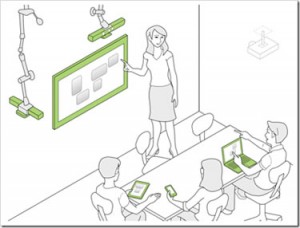

Recently Microsoft announced what they are referring to as Code Space, “a system that contributes touch + air gesture hybrid interactions to support co-located, small group developer meetings by democratizing access, control, and sharing of information across multiple personal devices and public displays.”

After reading about this new awesome tech, I figured that it’s still pretty far out and away from ever becoming a reality. The latest rumors say Microsoft’s newest XBOX, now in the works, will have a new Kinect that will be able to read lips and track fingers. Well, if you just watched the video, you have already seen it in action. The future is here, now. Let’s get this b*%$! up to 88MPH and go back to the, um, you know.

I can see Code Space being a big deal on the commercial side of AV and automation and then eventually in residential, but not anytime soon (by the time we get this in homes, we will all be like the humans in WALL-E, floating around aimlessly, with snorkels on so we can breathe as we are molar deep in our double wide popcorns at the 5D Theater). I mean, really, how many of you are going to take the class just so you can get this week’s fix of Dr. Oz? Although, in his defense, he is a genius (pretty sure Dr. House would beat him down, emotionally and physically). Boardrooms and educational facilities are where Code Space is going to thrive. The ability to share content from around the room and multitask with others on a collaboration level is extremely useful, not to mention cool beans and the cat’s meow.

Side note: I would take that class (Just so I could see a Dr. Oz/House showdown).

Honestly though, I don’t think it would be that tough to learn how to use Kinect (yourself) as the “Universal Remote.” Just using the gesture and voice controls last night was easy and required no thought at all to navigate to my desired functions. But that was only one specific device, not several devices working together to create a seamless user experience. I for one am excited to see where we’re going.

OK, now think, when was the last time you saw a TV without a remote? When was the last time you had to actually get up and turn everything on manually? When you find yourself changing channels with voice commands and gesture controls as you accidentally break a lamp and slap your buddy on the couch next to you because you were too lazy to get off the sofa and do it yourself, go outside and hug a tree. Then, carry on living back in the future. I’ll be there waiting.