On Why We Don’t Have the Shiniest Toys Anymore

AV people used to have the coolest toys. Look back not that many years to the first AV touchpanels you saw; people oohed a bit, they aahed a bit and, if the installer soldered the nine-pin D-sub correctly, were impressed when the touch of one (soft) button not only turned on a room’s main display, but also switched inputs to the VCR and even popped up a set of transport controls. Yes, training was sometimes a chore and yes, users were sometimes afraid of the new technology. But it also seemed special; it was a dramatic improvement over the table covered with remote controls they used at home.

Then time passed. So many people have tablets and smartphones that I’ve actually heard users refer to a shiny new, sleek tabletop touchpanel as an iPad. Users accustomed to navigating apps were now comfortable selecting “dial VTC call” or “present laptop” on a touchscreen. For a time, we didn’t have the coolest toys anymore, just industrial, enterprise-level versions of the same toys. That was still pretty cool, but not quite as cool. The cutting edge seemed to have caught up to us in the professional AV world.

Today, I look at enterprise tools and see a great deal of advancement and quite a few ways to create overall experiences and ecosystems that set us apart from the consumer world. That’s a topic for another day, but there’s one thing that has lagged: the cool factor. The user experience. As the AV system slowly vanishes, it becomes easy for us to cling to the last scraps of the familiar. What do I mean? I’m thinking now about how mobile interfaces have changed, and our interfaces, for the most part, have not.

“Open the Pod Bay Doors, Hal” — Action-centric Interfaces

The biggest “cool factor” in UI is probably voice. As I left a meeting yesterday, I took my phone out of my pocket and said “OK Google, navigate home.” The phone obediently opened a map application, set directions to my house, and started reading them to me. Next: “OK Google, text Karine ‘Leaving now. Be back soon.’” My robotic servant read the message back to me and fired it off via an SMS app. The nifty part isn’t that it’s hands-free (though that is nifty) but that it is task-oriented rather than application-oriented. We’re moving to a new way to interact with devices, in which we don’t choose to open a particular application, but ask the device to accomplish a particular goal. Did I ask a general question? It will open a search app. Send an email? Mail app. Count down to when I should check that my dinner is done cooking? The countdown timer app. And so on.
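To make the task-oriented idea concrete, here is a minimal Python sketch of how a phrase might be routed to whichever application can fulfill it. This is not how Google’s assistant actually works; the patterns, handler names and return strings are all invented for illustration, and a real system would rely on speech recognition and proper intent parsing rather than regular expressions.

```python
import re

# Hypothetical handlers: each stands in for the app that would do the work.
def navigate(destination: str) -> str:
    return f"maps: route to {destination}"

def send_text(recipient: str, body: str) -> str:
    return f"sms: to {recipient}: {body}"

def start_timer(minutes: str) -> str:
    return f"timer: {int(minutes)} min"

def web_search(query: str) -> str:
    return f"search: {query}"

# Ordered (pattern, handler) pairs. The patterns are illustrative assumptions.
ROUTES = [
    (re.compile(r"^navigate (?:to )?(.+)$", re.IGNORECASE), navigate),
    (re.compile(r"^text (\w+) (.+)$", re.IGNORECASE), send_text),
    (re.compile(r"^set (?:a )?timer for (\d+) minutes?$", re.IGNORECASE), start_timer),
]

def handle(utterance: str) -> str:
    """Route a phrase to the first handler whose pattern matches it."""
    text = utterance.strip()
    for pattern, handler in ROUTES:
        match = pattern.match(text)
        if match:
            # Captured groups become the handler's arguments.
            return handler(*match.groups())
    # Anything unrecognized is treated as a general question.
    return web_search(text)

print(handle("navigate home"))
print(handle("text Karine Leaving now. Be back soon."))
```

The caller never names an app; it states a goal and the routing table picks the tool. Adding a new capability means adding one entry to the table rather than another page of buttons.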

In the professional world, I’ve yet to see someone walk into a conference room and say, “Display presentation. Call London Office.” Speaking to my phone, I felt like Dave Bowman asking Hal to open the pod-bay doors. Fortunately, as of this writing, none of my devices has yet gone mad and tried to murder me.

[As an aside, I invoked the aforementioned murderous malfunctions when I learned that Rane had named its audio DSP platform “Hal.” The company’s answer was that the computer in Sir Arthur C. Clarke’s novel was the HAL 9000, while its latest hardware device is the Hal4. We therefore should safely have 8,996 further hardware iterations before our DSP products start killing astronauts. I’m still concerned enough about symbolism that I’d think twice before installing one in a space station. (Full disclosure: I am currently working on zero space-station projects.)]

Sharing is Caring — Content-Based Interface

What’s more interesting, and perhaps more subtle, is how tightly integrated mobile applications have become. I’m thinking in particular about the Android “Share” button, which expands to include additional services as one adds them. When I installed a networked printer, I didn’t need to reprogram my phone; I ran the printer app and the printer now appears as a “Share to” option in my existing image-viewing apps, alongside the Chromecast plugged into my TV, instant messenger programs, email and social networking.

So now, in addition to the action-based approach of the voice command, we have a content-based approach in the share button. One first selects content, and then chooses what to do with it. In fairness, wireless content sharing devices such as the Barco ClickShare or Crestron AirMedia can appear on this sharing menu. Other aspects of professional AV systems cannot, however, and there is also little of this kind of integration in PC desktop applications.
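The content-first pattern can be sketched in a few lines of Python. This illustrates the idea only and is not Android’s actual sharing API; the ShareTarget and ShareMenu classes, the content-type strings and the example targets are all assumptions made up for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, FrozenSet, List

@dataclass
class ShareTarget:
    """A hypothetical destination that announces what content it accepts."""
    name: str
    accepts: FrozenSet[str]          # e.g. {"image", "text"}
    send: Callable[[str], str]       # performs the share, returns a status

@dataclass
class ShareMenu:
    targets: List[ShareTarget] = field(default_factory=list)

    def register(self, target: ShareTarget) -> None:
        # Installing a new service only adds an entry; nothing is reprogrammed.
        self.targets.append(target)

    def options_for(self, content_type: str) -> List[str]:
        # The menu is built from the content, not from a fixed device list.
        return [t.name for t in self.targets if content_type in t.accepts]

    def share(self, target_name: str, content_type: str, content: str) -> str:
        for t in self.targets:
            if t.name == target_name and content_type in t.accepts:
                return t.send(content)
        raise LookupError(f"{target_name!r} cannot accept {content_type!r}")

menu = ShareMenu()
menu.register(ShareTarget("Email", frozenset({"image", "text"}),
                          lambda c: f"emailed {c}"))
menu.register(ShareTarget("Printer", frozenset({"image"}),
                          lambda c: f"printed {c}"))
# A room display registered later shows up without touching existing apps.
menu.register(ShareTarget("Room display", frozenset({"image", "video"}),
                          lambda c: f"displayed {c}"))

print(menu.options_for("image"))
print(menu.share("Room display", "image", "floorplan.png"))
```

The point of the design is that the apps holding the content never need to know which destinations exist. In an AV room, a display, a recorder or a far-end video call could each register as a target in the same way.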

Hardware Based — The Dashboard

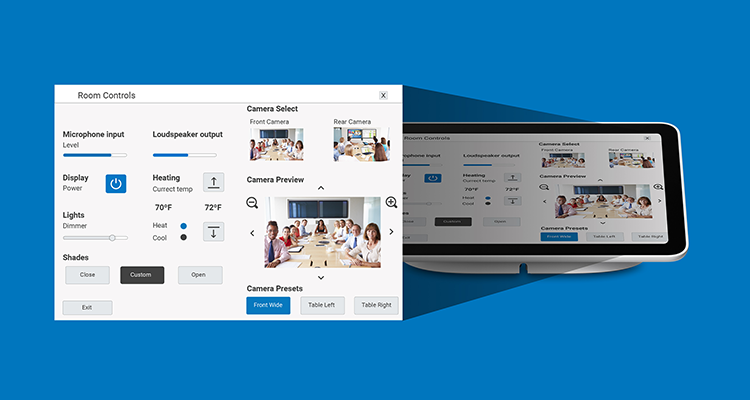

It’s pretty clear: For the most part, we don’t have all of the coolest toys anymore. I see many competent control GUI layouts which more or less follow the InfoComm Dashboard for Controls standard. (Did you know that was a standard? InfoComm has standards and guidelines for quite a few things if you take time to look for them. It’s a great resource.) These designs are familiar and comfortable, but increasingly dated. Part of the reason is that we’ve always looked at things not in terms of “actions” or “content” as above, but in terms of devices; this made a great deal of sense when a purpose-built hardware appliance was required for each action. Play physical media? Select the VCR/DVD/Blu-ray player. Make a video call? Select the VTC appliance. Present your laptop? Select the correct switcher input. The idea and positioning of persistent controls (e.g., volume, environmental factors, etc.) with a central window featuring specific device controls was a very good one for its time, and still works in many applications. I wonder, however, if we have let ourselves become a bit too fixated on what is now a decade-old standard.

Today, “play media,” “make a call,” “record an event” and even “display mobile content” could ALL be PC-based applications. This renders the center of the dashboard (the heart of a traditional AV control system) an empty splash page. Do you remember your first systems in which “video call” gave you dialing buttons plus camera control, “watch movie” gave you your DVD transport controls, and “display PC” gave you a sad little message that said “control your PC with the keyboard and mouse”? Today that “use keyboard and mouse” message might apply to video calls, media playback and pretty much anything else you’d do. We’re left with a “dashboard” that is a pretty (and functional) frame around what has increasingly become dead space.

Where Do We Go From Here?

Various professional manufacturers have been working on different tools that would make AV control not only “cool” again, but also modern and relevant. Harman has been selling NFC-enabled touchpanels for years now (since before they were branded as Harman). I’ve seen some use of this to personalize dialing directories and the like, but implementation is still far from mainstream. But it is, at least, a start.

Also of note is Crestron’s creation of an app to pair with its PinPoint beacons. It’s created something that knows where you are (via the magic of Bluetooth), can book the nearest meeting room for you and can not only activate the system when you walk in, but even pair you with a Crestron AirMedia device in the space. Thus far, this seems to be an all-Crestron experience. Having your meeting start when you walk in not only feels like a cool toy, but it also gets us away from an old paradigm.

It also, more to the point, echoes something that Alan Vezina said on these pages a few weeks ago: that AV programming is a traditionally closed set of ecosystems with very high barriers to entry. His own firm, Jydo, is based on Python, JavaScript and HTML5. Crestron has moved to .NET and Harman to Java. HRC and Utelogy use HTML5. The AV world is starting to open. We just need to step through the door.

What I hope is that this will begin a shift away from thinking of “AV programmers” primarily as people who program AV systems, and toward thinking of them as general-purpose programmers whose work is in the AV industry. Let’s make the mobile version of an AV touchpanel something more than a scaled-down copy of the wall-panel version; there are opportunities to use environmental, audio and location services. There’s a chance to stretch interactivity beyond “touch an icon, something happens.” There’s a chance to make some cool toys.